I’ve been building a project I intend to open source. Nothing unusual about that. Plenty of us spend evenings and weekends writing software we plan to give away. It’s how open source has always worked: you donate your time, your expertise, and your Saturday mornings.

But when I upgraded from Claude Pro to Max, something clicked. I’m not just donating my time any more. I’m donating my tokens.

AI coding assistants are a fairly heavy part of my workflow these days, and they’re not free. There’s a monthly subscription for the privilege. And when that work is destined to become an open source project, that’s actual money going toward building something to give away. That’s a shift. A subtle one, but I think it’s a fundamental change in the economics of open source contribution that nobody seems to be talking about.

The cost of contributing just changed

Open source sustainability has been a conversation for years. It tends to focus on maintainer burnout, funding models like GitHub Sponsors and Open Collective, and the question of how we support the people who keep critical infrastructure running. All important stuff. But nearly all of it is framed around time: maintainers don’t have enough of it, contributors donate it for free, and the whole system runs on goodwill and reputation.

Nobody seems to be discussing the fact that contributing now costs money in a way it didn’t before. If you’re using Cursor, Claude Code, Copilot, or any of the other AI coding tools to work on an open source project, you’re paying a subscription for that. It adds up, especially for sustained work on larger projects.

The cost isn’t prohibitive, but it exists, and that’s the point. Previously, the only input was your time and knowledge. Now there’s a financial dimension layered on top. And I think that changes the calculus for a lot of people, whether they’ve consciously noticed it or not.

Thinking about idle capacity

This realisation got me thinking about efficiency. Not in the abstract, but in the quite practical sense: my Claude Code subscription is only actively being used for a portion of the day. When I’m asleep, at dinner, or doing something else, that capacity sits idle.

For my own projects, I started looking at tools like Steve Yegge’s Gas Town, a multi-agent orchestrator that coordinates multiple Claude Code instances working on different tasks. The idea was simple enough: if I could have agents picking up work while I wasn’t at the keyboard, I’d get more value from the subscription I’m already paying for.

But then the obvious next thought arrived. If I can make my idle capacity work for my own projects, could I make it work for the community too? Could someone else’s open source project feed tasks to my agents overnight, using my subscription, while I’m doing nothing with it?

We’ve done this before

The idea of pooling idle resources across a distributed network isn’t new. We’ve been here several times before, each with different incentive structures.

SETI@home and Folding@home let people donate idle CPU cycles to scientific research. The model was purely altruistic: you got a screensaver and maybe a spot on a leaderboard. Bitcoin mining took the same basic concept of donating compute power and made it transactional: contribute processing capacity, receive tokens with monetary value. Napster and the peer-to-peer file sharing era showed a different model again, where everyone contributed bandwidth and storage and the reward was access to everyone else’s library. There were browser-based distributed computing plugins too, which let you contribute processing power in the background while you browsed the web.

The common thread across all of these is idle capacity being put to collective use. What varies is the incentive model: altruism, financial reward, or mutual access.

What’s different now is the nature of what you’re donating. Rather than raw compute cycles or storage or bandwidth, you’re donating inference capacity: access to an AI model’s ability to reason about and produce code, which is a qualitatively different kind of resource. And unlike CPU cycles, which were essentially unlimited (your computer was on anyway), inference capacity on a subscription model comes with weekly limits.

The trading desk analogy

The analogy that kept coming back to me was trading desks. In financial markets, there are mechanisms that allow entities to trade with leverage outside of core hours. The capacity exists around the clock, but usage is concentrated during business hours for any given time zone. So structures emerge to let others use that capacity when you’re not.

The same principle applies here. If you’re paying for a flat-rate AI coding subscription, you’ve got capacity that sits idle for roughly two-thirds of the day. Not because you don’t want to use it, but because you’re asleep, or at work, or living your life. What I was imagining was some kind of integration, probably with something like Gas Town, where an open source project could publish agent-suitable tasks and your local instance could pick them up during your off-hours. No sharing of API keys or account access. Your agents would pull tasks from a feed, process them using your subscription, and submit the results as draft PRs for the project maintainer to review.

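The flat-rate subscription model is what makes this viable where earlier ideas about sharing API credits weren’t. With pay-per-token API credits, every donated token is a direct extra cost. With a flat-rate plan, you’re time-sharing capacity that you’ve already paid for and that would otherwise go unused.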

The broader ecosystem seems to be heading in this direction too. Claude Code has been developing its own Agent Teams feature for coordinating multiple instances. OpenAI’s Codex app is building out cloud-based automations that can run when your computer is closed. The pattern of agents working unattended is emerging across every major platform.

Then the Wasteland arrived

While I was noodling on all this, Steve Yegge went and built it.

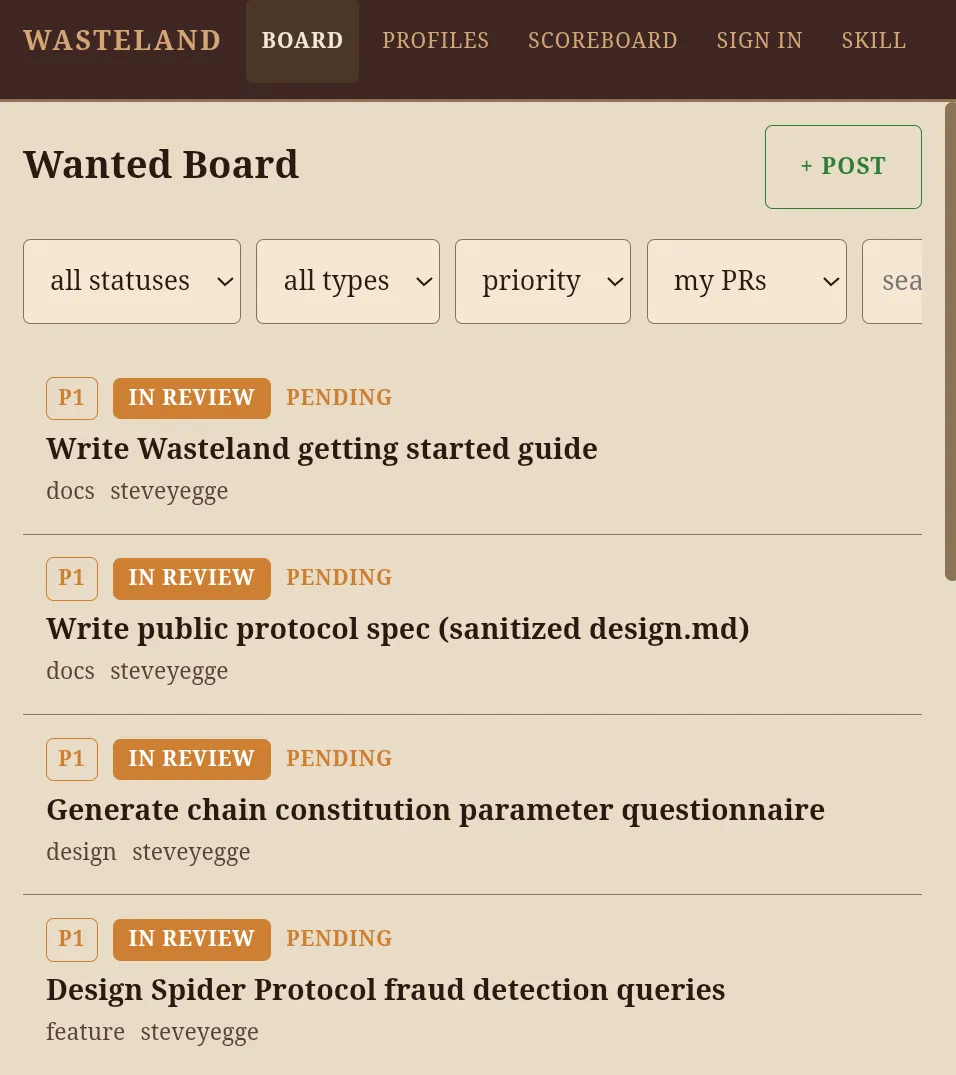

He announced the Wasteland this week, and it’s remarkably close to what I’d been imagining, only far more developed. The Wasteland federates Gas Town instances together into a trust network. At the heart of it is a shared Wanted Board where people post work, and others use their Gas Towns to build it, earning credit for what they do.

The architecture has three kinds of actors: rigs (your agent setup, which rolls up to a human participant), posters (who put work on the board), and validators (who attest to the quality of completed work). These aren’t fixed roles. Any rig can post work, and any rig with sufficient trust can validate. The lifecycle of a task follows Git’s familiar fork/merge model: open, claimed, in review, completed. The whole thing runs on Dolt, a SQL database with Git semantics, which enables the federation of structured data across participants.
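
To make that lifecycle concrete, here is a minimal sketch of it as a state machine. It is my own illustration, not the Wasteland’s actual schema: the four states come from the description above, but the `TaskStatus` and `advance` names and the backward transitions (releasing a claim, sending work back from review) are assumptions.

```python
from enum import Enum


class TaskStatus(Enum):
    OPEN = "open"
    CLAIMED = "claimed"
    IN_REVIEW = "in review"
    COMPLETED = "completed"


# Assumed transition table. The forward path matches the lifecycle described
# in the post; the backward edges are guesses at how a claim might be released
# or a review sent back for more work.
ALLOWED = {
    TaskStatus.OPEN: {TaskStatus.CLAIMED},
    TaskStatus.CLAIMED: {TaskStatus.IN_REVIEW, TaskStatus.OPEN},
    TaskStatus.IN_REVIEW: {TaskStatus.COMPLETED, TaskStatus.CLAIMED},
    TaskStatus.COMPLETED: set(),
}


def advance(current: TaskStatus, target: TaskStatus) -> TaskStatus:
    """Return the new status, or raise if the move is not allowed."""
    if target not in ALLOWED[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target
```

A task would move through `advance(TaskStatus.OPEN, TaskStatus.CLAIMED)` and so on, while trying to jump straight from open to completed raises an error.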

You don’t even need Gas Town to participate. All you need is Dolt, a free DoltHub account, and a coding agent that knows the schema.

Recognition that actually means something

What caught my attention most was the reputation system, because open source has always run partly on recognition. Your GitHub contribution graph, your name in the commit history, your standing in a project’s community. These things matter. They’re a big part of why people contribute in the first place.

The Wasteland formalises this with what Yegge calls “stamps.” When a validator accepts your work, they stamp your record. But it’s not a simple pass/fail. Stamps are multi-dimensional attestations covering quality, reliability, and creativity, each scored independently. They include a confidence level and a severity rating. Over time, these accumulate into a structured, evidence-backed work history that’s auditable (anyone can trace it back through the chain to the original work) and portable across different Wastelands.

There’s a trust ladder too. You start as a basic participant, able to browse, claim, and submit. As stamps accumulate, you progress to contributor, then maintainer. Maintainers can validate others’ work, which creates a natural apprenticeship path. And there’s what Yegge calls the “yearbook rule”: you can’t stamp your own work. Your reputation is built entirely from what others say about you, not what you claim about yourself.
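
Here is a rough sketch of how stamps, the yearbook rule, and the trust ladder might fit together. Everything in it is hypothetical: the field names, the score scales, and especially the stamp counts needed for each rung are my own placeholders, since the announcement doesn’t spell them out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stamp:
    author: str        # rig whose work is being attested
    validator: str     # rig issuing the stamp
    quality: int       # each dimension is scored independently (scale assumed)
    reliability: int
    creativity: int
    confidence: float  # assumed to be a value between 0.0 and 1.0
    severity: str      # assumed free-form label; real values are not specified

    def __post_init__(self) -> None:
        # Yearbook rule: you cannot stamp your own work.
        if self.author == self.validator:
            raise ValueError("yearbook rule: a rig cannot stamp its own work")


# Hypothetical thresholds: (minimum stamps received, rung name).
LADDER = [(0, "participant"), (10, "contributor"), (50, "maintainer")]


def trust_level(stamps: list[Stamp], rig: str) -> str:
    """Return the highest rung whose threshold the rig's received stamps meet."""
    received = sum(1 for s in stamps if s.author == rig)
    level = LADDER[0][1]
    for minimum, name in LADDER:
        if received >= minimum:
            level = name
    return level
```

The point of putting the yearbook check in the constructor is that a self-issued stamp can never exist in the first place, so reputation can only ever be counted from what other rigs have attested.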

It sits in an interesting spot on the incentive spectrum. More structured than SETI@home’s altruistic leaderboard, less mercenary than Bitcoin’s financial rewards. It’s closer to how open source reputation has always worked, just formalised and made portable. The RPG elements (character sheets, leaderboards, skill progression) are a nice touch. They seem to be emerging almost naturally from the underlying system.

The enterprise opportunity

If this model works for individual contributors, it gets even more interesting at the enterprise level.

Companies with team or enterprise subscriptions for AI coding tools have far more idle capacity than any individual. Fifty Claude Code seats aren’t all active simultaneously. Probably not even close. And plenty of companies already invest in open source by paying engineers to contribute to upstream projects they depend on. Directing idle agent capacity toward those same dependencies is a natural extension of that, and it scales better because you don’t need to pull a developer off product work.

The Wasteland’s federation model supports this directly. Anyone can create their own Wasteland for a team, a company, a university, or an open source project. Your rig identity and stamps are portable across them. So a company could set up its own internal Wasteland for prioritised work, while also contributing to the public commons. The reputation earned in one carries over to the other.

The constraint nobody should ignore

There’s one practical issue that needs acknowledging, because it’s the thing that separates this from the earlier distributed computing models.

With SETI@home, donating CPU cycles had zero impact on your own capacity. Your computer was on anyway, and the screensaver wasn’t competing with anything. With AI coding subscriptions, your quota is a depletable resource within a fixed window. Claude Code has weekly usage limits. Donating capacity isn’t free in the way that donating idle CPU cycles was. You’re making a bet that you won’t need those tokens before the reset.

Any tooling built around this needs to be quite smart about it. You’d want reserve thresholds, something like “keep 30% of my weekly quota in reserve, donate the rest if I haven’t used it by Thursday.” In a personal context, the calculation is fairly relaxed: “my quota resets in two days and I’ve got no plans to work this weekend, so let the agents loose on community work.” In a business context, you’d want to be much more conservative. An engineering manager isn’t going to sign off on donating capacity if there’s any risk it leaves the team short on a Monday morning.
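
The personal version of that rule is simple enough to sketch. This is only an illustration under a couple of assumptions: that the quota week runs Monday to Sunday, and that usage is available as a fraction of the weekly limit. The `donatable_share` name and its parameters are made up for the example.

```python
from datetime import date

THURSDAY = 3  # date.weekday() counts from Monday as 0


def donatable_share(
    used: float,
    today: date,
    reserve: float = 0.30,
    release_day: int = THURSDAY,
) -> float:
    """Fraction of the weekly quota that can go to community work today.

    used        -- fraction of the weekly quota already consumed (0.0 to 1.0)
    reserve     -- fraction always held back for my own work
    release_day -- first weekday on which unused quota may be donated
    """
    if not 0.0 <= used <= 1.0:
        raise ValueError("used must be a fraction between 0 and 1")
    if today.weekday() < release_day:
        # Too early in the week to know what I will need myself.
        return 0.0
    # Whatever is left after my own usage and the reserve, never negative.
    return max(0.0, 1.0 - used - reserve)
```

A business deployment would presumably raise `reserve`, push `release_day` later, or both, which is the conservative stance described above.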

This constraint is real, but it’s manageable. Acknowledging it probably makes the whole idea more credible rather than less. It shows we’re dealing with a practical system, not a utopian fantasy.

The contribution model is changing

I arrived at all this from a fairly simple starting point: I noticed I was spending money to build something I planned to give away, and that felt like a new kind of cost that nobody was talking about. That led me to think about idle capacity, which led me to Gas Town, which led me to wonder about federated contribution models. And then the Wasteland showed up and made the speculation concrete.

The underlying observation still stands, though: contributing to open source now costs money as well as time, and the open source sustainability conversation hasn’t caught up to that yet. The Wasteland is one answer, and it’s a pretty compelling one. But regardless of which specific platform or protocol wins out, the economics have shifted. The building blocks are here: multi-agent orchestrators, federated trust networks, portable reputation systems. The contribution model for open source is changing whether we explicitly design for it or not. I’d rather we designed for it.